Understanding Android Runtime and Dalvik

Android has undergone many changes to its build process, execution environment, and performance since it was unveiled in 2007. Before we begin, let’s briefly touch on the JVM and what it does.

JVM

The Java Virtual Machine (JVM) is an engine that enables a computer to run applications that have been compiled to Java bytecode. At its core, the JVM converts Java bytecode to machine code. So why is the JVM not used on Android? The JVM is designed for powerful systems with plenty of memory and storage. In the early days of Android, however, memory and performance were not its strongest suits: the first Android phone had just under 200 MB of RAM. Clearly, the JVM was not an option here. To solve this problem, Google came up with the Dalvik Virtual Machine.

DVM

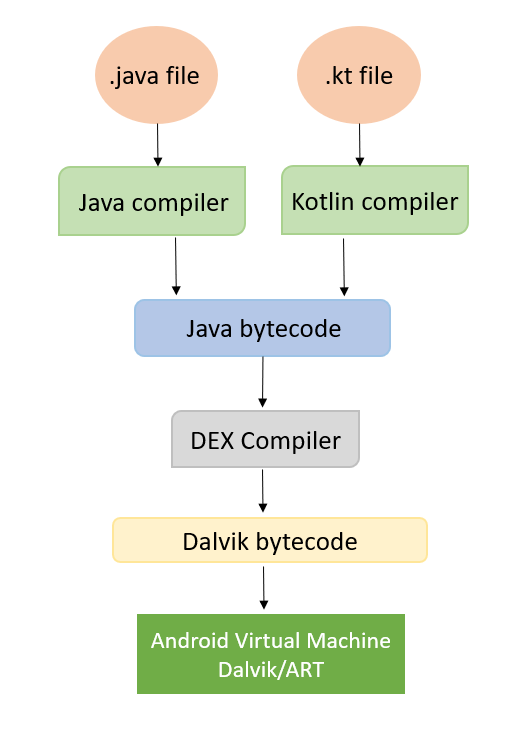

The Dalvik Virtual Machine (DVM) is optimized for memory and power usage so that it works efficiently on Android devices. But the DVM cannot work directly with the Java bytecode generated by a Java or Kotlin compiler. The Java bytecode (.class files) first needs to be converted into Dalvik bytecode (.dex files) at build time. The DVM then converts this Dalvik bytecode to machine code.

The primary concern of Dalvik was RAM optimization. So instead of converting the whole application’s bytecode to machine code up front, it used a strategy called JIT, or Just-In-Time, compilation. With this strategy, the compiler compiles only the parts of the code that are actually needed at runtime, while the app is executing. Since the entire code is not compiled at once, less RAM is used, but compiling during execution has a major impact on the application’s runtime performance. To tackle this problem, Google introduced ART, the Android Runtime.
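To make the JIT idea concrete, here is a minimal sketch in plain Java. It is a toy model, not how Dalvik is implemented: the class `LazyJitSketch` and all of its methods are hypothetical, and "compiling" is simulated by copying a method into a cache the first time it is called.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.IntUnaryOperator;

// Toy model of JIT compilation. Every name here is hypothetical; this only
// illustrates "compile on first use", not Dalvik's real implementation.
final class LazyJitSketch {
    // Stand-in for the app's bytecode: method name -> behaviour.
    private final Map<String, IntUnaryOperator> bytecode = new HashMap<>();
    // Stand-in for the in-memory cache of generated machine code.
    private final Map<String, IntUnaryOperator> codeCache = new HashMap<>();
    private int compileCount = 0;

    void define(String name, IntUnaryOperator body) {
        bytecode.put(name, body);
    }

    int invoke(String name, int arg) {
        IntUnaryOperator code = codeCache.get(name);
        if (code == null) {
            // First call: pay the (simulated) compilation cost now.
            IntUnaryOperator body = bytecode.get(name);
            if (body == null) {
                throw new IllegalArgumentException("Unknown method: " + name);
            }
            compileCount++;
            code = body;
            codeCache.put(name, code);
        }
        return code.applyAsInt(arg);
    }

    int compileCount() {
        return compileCount;
    }

    int cachedMethodCount() {
        return codeCache.size();
    }

    public static void main(String[] args) {
        LazyJitSketch vm = new LazyJitSketch();
        vm.define("square", x -> x * x);
        vm.define("neverCalled", x -> x + 1);
        vm.invoke("square", 4);
        vm.invoke("square", 5);
        System.out.println("methods compiled: " + vm.compileCount());
    }
}
```

Methods that are never called never enter the cache, which is where the memory saving comes from. The price is that the first call to each method has to wait for compilation while the app is already running.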

ART

As the hardware capabilities of Android phones improved, developers started to question the dynamic compilation strategy. ART became the default runtime in API 21 (Android 5.0 Lollipop), after an experimental preview in Android 4.4 KitKat, and it used the opposite compilation strategy. Instead of JIT, it used AOT, or Ahead-Of-Time, compilation. As the name suggests, the dex bytecode was now compiled to machine code before the app ever ran, and the result was saved in a .oat file. Every time the app was opened, ART loaded this .oat file, eliminating the need for JIT compilation. This improved runtime performance significantly, but had two major drawbacks:

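- Higher storage and RAM consumption, since the compiled machine code is larger than the dex bytecode it is generated from.

- Longer app install and update times, as the app’s dex bytecode is converted to machine code during installation.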

Google soon realized that most parts of an application are rarely used by the majority of users. Clearly, it was inefficient to compile and hold the entire app’s machine code in memory if most of it remained unused.
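The waste described above is easy to see in a toy model. The sketch below is only an illustration under simplifying assumptions: `AotInstallSketch` and its methods are hypothetical names, and "compiling" is reduced to adding a method name to a set at install time.

```java
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

// Toy model of install-time AOT compilation. All names are hypothetical.
final class AotInstallSketch {
    private final Set<String> compiled = new LinkedHashSet<>();
    private final Set<String> executed = new LinkedHashSet<>();

    // "Install": every method is compiled up front, used or not.
    AotInstallSketch(List<String> allMethods) {
        compiled.addAll(allMethods);
    }

    // "Run": no compilation happens here, the code is already there.
    void invoke(String method) {
        if (!compiled.contains(method)) {
            throw new IllegalArgumentException("Unknown method: " + method);
        }
        executed.add(method);
    }

    int compiledAtInstall() {
        return compiled.size();
    }

    // Methods whose machine code was generated but never executed.
    List<String> compiledButNeverRun() {
        List<String> unused = new ArrayList<>(compiled);
        unused.removeAll(executed);
        return unused;
    }

    public static void main(String[] args) {
        List<String> methods = new ArrayList<>();
        methods.add("login");
        methods.add("feed");
        methods.add("exportPdf");
        AotInstallSketch app = new AotInstallSketch(methods);
        app.invoke("login");
        app.invoke("feed");
        System.out.println("compiled but never run: " + app.compiledButNeverRun());
    }
}
```

Startup is fast because nothing is compiled at runtime, but the install step does work for every method, including the ones a given user never touches.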

Profile-guided compilation & re-emergence of JIT

In API 24 (Android 7.0 Nougat), Google re-introduced JIT, but with a tweak called profile-guided compilation. This strategy lets an app’s performance improve over time as it is run again and again. The default compilation strategy is JIT, but when ART detects that some methods are used more frequently than others, that is, they are “hot”, it records them in a profile. ART then uses the AOT strategy to precompile and cache these hot methods so that they don’t have to be compiled every time. This precompilation is done only when the device is charging and idle. This approach had a few advantages:

- RAM optimization: since the majority of an app’s code is not used frequently, only a small amount of code is precompiled and held in RAM.

- Faster app installs: during installation, there is no usage data for the app yet, so ART does not know which parts of the bytecode are worth converting to machine code. It therefore skips AOT compilation at install time.

This worked great, but still had a problem. To accumulate data about “hot” methods, the app needed to run a few times. These initial runs of the app would be mostly JIT-based, and therefore slow. To fix this, Google introduced Profiles in the cloud.
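The profile-guided flow described in this section can be sketched as follows. This is a simplified model, not ART’s actual implementation: the class `ProfileGuidedSketch`, the `HOT_THRESHOLD` value, and the `onDeviceIdleAndCharging` hook are all assumptions made up for illustration.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

// Toy model of profile-guided compilation. All names are hypothetical.
final class ProfileGuidedSketch {
    // Assumed cut-off: a method called at least this often counts as "hot".
    static final int HOT_THRESHOLD = 3;

    // The profile: how often each method has been called so far.
    private final Map<String, Integer> profile = new HashMap<>();
    // Methods that have been precompiled (AOT) from the profile.
    private final Set<String> precompiled = new TreeSet<>();
    private int jitCalls = 0;
    private int precompiledCalls = 0;

    void invoke(String method) {
        profile.merge(method, 1, Integer::sum);
        if (precompiled.contains(method)) {
            precompiledCalls++;   // fast path: machine code already exists
        } else {
            jitCalls++;           // slow path: handled by the JIT at runtime
        }
    }

    // Stand-in for the background step that runs while charging and idle.
    void onDeviceIdleAndCharging() {
        for (Map.Entry<String, Integer> entry : profile.entrySet()) {
            if (entry.getValue() >= HOT_THRESHOLD) {
                precompiled.add(entry.getKey());
            }
        }
    }

    Set<String> precompiledMethods() {
        return new TreeSet<>(precompiled);
    }

    int jitCalls() {
        return jitCalls;
    }

    int precompiledCalls() {
        return precompiledCalls;
    }

    public static void main(String[] args) {
        ProfileGuidedSketch art = new ProfileGuidedSketch();
        for (int i = 0; i < 5; i++) {
            art.invoke("onScroll");
        }
        art.invoke("openSettings");
        art.onDeviceIdleAndCharging();
        System.out.println("precompiled: " + art.precompiledMethods());
    }
}
```

Note that every call made before the first idle window lands on the JIT path. That is exactly the cold-start problem with the first few runs of an app.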

Profiles in the cloud

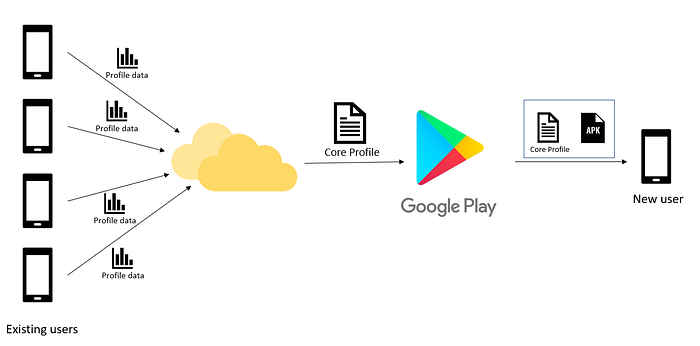

The solution discussed above would have been perfect if the profile data about the most frequently used methods could somehow be downloaded along with the app’s APK. This is exactly what Google did with Profiles in the cloud. Introduced in API 28 (Android 9 Pie), this strategy leverages the fact that most users use a particular application in a more or less similar fashion. Data about frequently used code parts is collected from the existing users of the application. This collected data is then used to create a common core profile for the application.

Now, when a new user downloads the app from Google Play, this core profile is downloaded along with it. ART uses this file to precompile the frequently used code parts. As the user keeps using the app over time, ART collects profile data for this user and recompiles the code parts that are frequently used by this particular user.
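As a rough sketch of the idea, the aggregation could look like the code below. The real pipeline is not described in this article, so everything here is an assumption: the class `CloudProfileSketch`, the `minShare` parameter, and the rule "a method is in the core profile if it is hot for at least that share of users" are all hypothetical.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

// Toy model of building and using a cloud profile. All names are hypothetical.
final class CloudProfileSketch {

    // Builds a core profile: methods that are hot for at least `minShare`
    // (a fraction between 0 and 1) of the users who uploaded a profile.
    static Set<String> buildCoreProfile(List<Set<String>> userProfiles, double minShare) {
        Map<String, Integer> votes = new HashMap<>();
        for (Set<String> userProfile : userProfiles) {
            for (String method : userProfile) {
                votes.merge(method, 1, Integer::sum);
            }
        }
        Set<String> core = new TreeSet<>();
        for (Map.Entry<String, Integer> entry : votes.entrySet()) {
            if (entry.getValue() >= minShare * userProfiles.size()) {
                core.add(entry.getKey());
            }
        }
        return core;
    }

    // On the device: start from the downloaded core profile, then add the
    // methods that turn out to be hot for this particular user.
    static Set<String> mergeWithLocalProfile(Set<String> core, Set<String> localHot) {
        Set<String> merged = new TreeSet<>(core);
        merged.addAll(localHot);
        return merged;
    }

    public static void main(String[] args) {
        Set<String> alice = new TreeSet<>(List.of("login", "feed"));
        Set<String> bob = new TreeSet<>(List.of("login", "feed", "search"));
        Set<String> core = buildCoreProfile(List.of(alice, bob), 0.5);
        System.out.println("core profile: " + core);
    }
}
```

A new install would precompile the methods in the core profile right away, and the merge step models how the profile drifts towards the individual user’s habits afterwards.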

That’s all folks!

Stay tuned for more tech articles. Tons of stuff coming up! Keep coding. Peace.